When I visited my grandpa a few months after his open-heart surgery, the elephant in the room was that he literally saw elephants in the dining room. After his four-year struggle with dementia, I was relieved when my grandpa’s journey through the fog was finally over. But even through this period of his life, which was often frustrating for him and everyone around him, he had his memories.

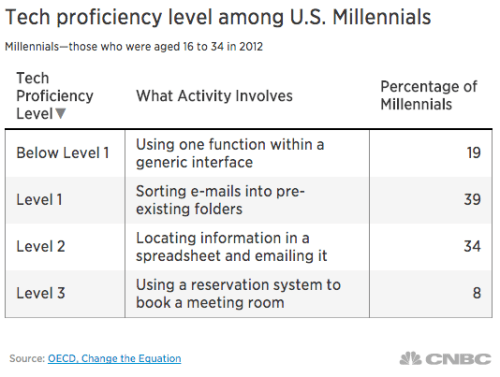

In the ninth chapter of The Shallows, Nicholas Carr posits that our ever-growing reliance on the Internet, and the overstimulation that comes with it, may have a startlingly negative impact on our ability to retain memories. (The irony is that mobile apps meant to improve memory retention are very popular.)

The Pew Research Center ran an interesting article compiling studies that show Americans aren’t dying the way we used to. In fact, we’re living longer, “[b]ut the downside of living longer is the higher rates of dementia, senility and Alzheimer’s in the population, which are also more costly. In 2010, the Centers for Disease Control and Prevention documented 180,021 such cases compared with just 293 such cases in 1968.”

If Carr’s argument about our declining memory retention is right, what will the future hold for a generation of Americans who are living longer but who may face the same, if not worse, chances of living with memory-related diseases?

![social-lights[3]](https://strategicallycommunicating.com/wp-content/uploads/2015/11/social-lights31.jpg?w=500)